First and foremost, I want to express my sincere gratitude for your response and especially for the video you provided. It was incredibly helpful, and we truly appreciate your effort in helping us.

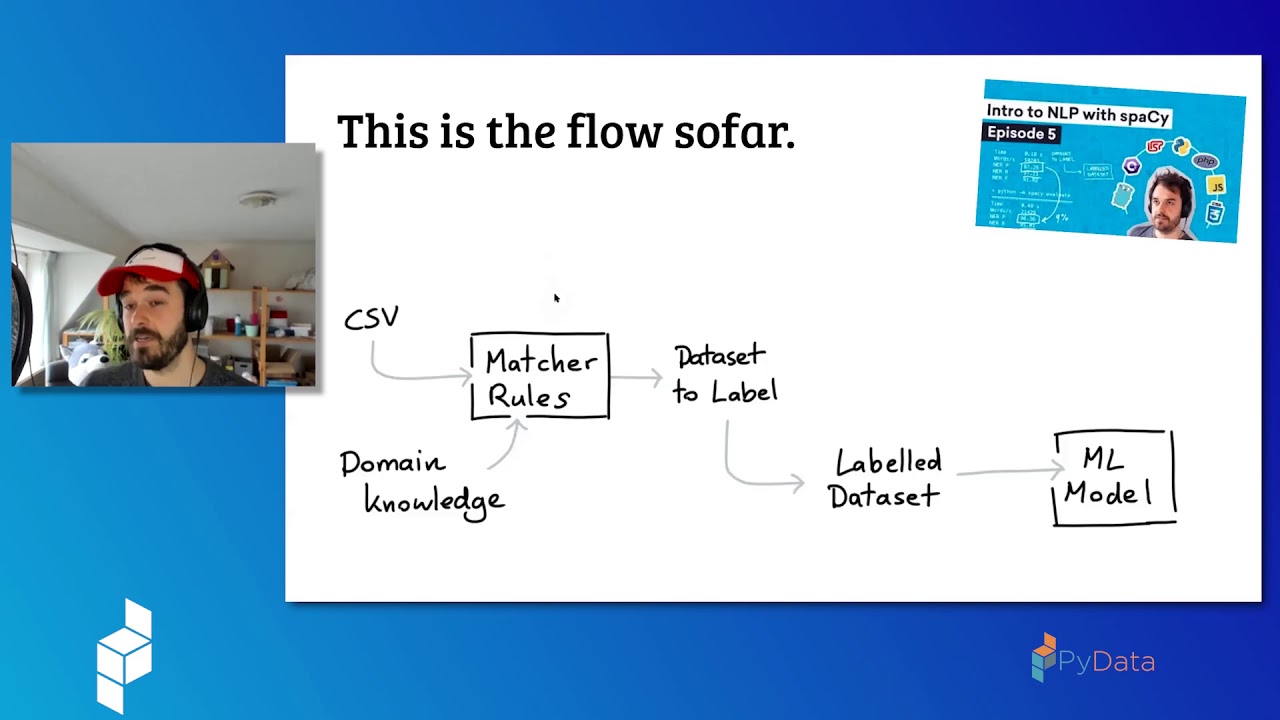

To give more context on our project: we are using the en_core_web_lg model as the base for a system that identifies persons and organizations, and our goal is to adapt it to our domain. Because our client information is too sensitive to use, our data instead consists of emails (including headers) from the Enron dataset. As a result, a significant portion of our training and evaluation data does not consist of full sentences, and the majority of it is located in the header section. We expect the model to perform poorly on the headers, but we have also found that it has difficulty identifying persons in some of the easy sentences in the email bodies.

Our dataset contains up to roughly 1000 entities, split about evenly between the two categories. For instance, in the 1000-entity set, 505 are persons and 499 are organizations. Note that each smaller set is a subset of the larger one: the 600 entities are all included in the 1000 entities, which add 400 new ones. Each entity is unique, but an entity can appear more than once.
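To make the subset relationship concrete, here is a minimal sketch of how nested training sets like ours could be built. This is not our actual pipeline: the function name `nested_subsets`, the fixed seed, and the placeholder entity names are all assumptions for illustration. The idea is simply to shuffle once and take prefixes, so every smaller set is contained in every larger one.

```python
import random


def nested_subsets(entities, sizes, seed=0):
    """Return {size: subset} where each smaller subset is contained in each larger one.

    Hypothetical helper: shuffles once with a fixed seed and takes prefixes.
    """
    if max(sizes) > len(entities):
        raise ValueError("requested size exceeds the number of entities")
    shuffled = list(entities)
    random.Random(seed).shuffle(shuffled)  # one shuffle shared by all sizes
    return {n: shuffled[:n] for n in sorted(sizes)}


# Placeholder entities, not real data from our dataset.
all_entities = [f"entity_{i}" for i in range(1000)]
subsets = nested_subsets(all_entities, sizes=[600, 1000])

# The 600-entity set is fully contained in the 1000-entity set,
# which contributes 400 entities the smaller set has not seen.
new_in_large = set(subsets[1000]) - set(subsets[600])
print(len(subsets[600]), len(subsets[1000]), len(new_in_large))
```

Because the prefixes share one shuffle, differences between models trained on different sizes come from the added entities rather than from a different sample.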

We trained our models on these entities and then tested them on a dedicated validation set that was never used for training. The validation set consists of 200 entities, again split roughly 50/50 between the two categories. We implemented our own validation logic: a prediction counts as correct only if its string is identical to the gold string and it is annotated at the same location with the same elements.
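For reference, the strict matching described above can be sketched roughly as follows. This is a simplified illustration, not our exact code: the `Span` type, the `strict_match_scores` and `span_of` helpers, and the example sentence are made up, and I am assuming a match means the same start offset, end offset, label, and text.

```python
from typing import NamedTuple


class Span(NamedTuple):
    start: int  # character offset where the entity begins
    end: int    # character offset just past the entity
    label: str  # e.g. "PERSON" or "ORG"
    text: str   # the annotated string itself


def strict_match_scores(gold, predicted):
    """Count a prediction as correct only if every field equals a gold span."""
    gold_set, pred_set = set(gold), set(predicted)
    tp = len(gold_set & pred_set)   # exact matches
    fp = len(pred_set - gold_set)   # predicted but not in gold
    fn = len(gold_set - pred_set)   # in gold but missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"tp": tp, "fp": fp, "fn": fn,
            "precision": precision, "recall": recall, "f1": f1}


def span_of(text, entity, label):
    """Hypothetical helper: locate the first occurrence of `entity` in `text`."""
    start = text.index(entity)
    return Span(start, start + len(entity), label, entity)


# Made-up sentence for illustration only.
text = "Please forward this to Jan Butler at Enron."
gold = [span_of(text, "Jan Butler", "PERSON"), span_of(text, "Enron", "ORG")]
predicted = [span_of(text, "Enron", "ORG")]  # the person is missed

scores = strict_match_scores(gold, predicted)
print(scores)
```

Under this scheme a span that is off by one character, or that has the right boundaries but the wrong label, counts as both a false positive and a false negative, so the scores are stricter than a partial-match evaluation would give.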

Please refer to the attached image. The first picture shows our annotated gold standard, and the second shows the model's performance after training on top of the existing person/org tags of en_core_web_lg with 1000 entities. Grey marks true positives, while red marks false negatives. As you can see, the model did not identify the name Jan Butler.

We stopped training early, with a max_step_size of 110, because when we initially tried multiple combinations of training hyperparameters on 600 entities, a max_step_size of 110 and a learning rate of 0.003 yielded the best results. This does not necessarily mean that these parameters are the best for fewer or more entities, but we did not want to change too many settings while comparing model progress.